Cybersecurity Snapshot: Cyber Agencies Offer Secure AI Tips, while Stanford Issues In-Depth AI Trends Analysis, Including AI Security

Check out recommendations for securing AI systems from the Five Eyes cybersecurity agencies. Plus, Stanford University offers a comprehensive review of AI trends. Meanwhile, a new open-source tool aims to simplify SBOM usage. And don’t miss the latest CIS Benchmarks updates. And much more!

Dive into six things that are top of mind for the week ending April 19.

1 - Multinational cyber agencies issue best practices for secure AI deployment

Looking for best practices on how to securely deploy artificial intelligence (AI) systems? Check out the AI security recommendations jointly published this week by cybersecurity agencies from the Five Eyes countries: Australia, Canada, New Zealand, the U.K. and the U.S.

“Deploying AI systems securely requires careful setup and configuration that depends on the complexity of the AI system, the resources required (e.g., funding, technical expertise), and the infrastructure used (i.e., on premises, cloud, or hybrid),” reads the 11-page document.

The guide, titled “Deploying AI Systems Securely,” is aimed at organizations deploying and operating externally developed AI systems – whether the deployments are on-premises or in private cloud environments.

It focuses on three main areas of AI system security:

- Securing the deployment environment through, for example, solid governance, a well-designed architecture and hardened configurations

- Continuously protecting the AI system by, for example, securing exposed APIs and actively monitoring AI model behavior

- Securing the AI system’s operation and maintenance through, for example, strict access controls; audits and penetration testing; and regular updates and patches

“The authoring agencies advise organizations deploying AI systems to implement robust security measures capable of both preventing theft of sensitive data and mitigating misuse of AI systems,” the document reads.

The main goals of the joint guidance are to help organizations:

- Enhance AI systems’ confidentiality, integrity, and availability

- Effectively mitigate known vulnerabilities in AI systems

- Flag and respond to attacks against AI systems and their data and services using appropriate methods and controls

For more information about deploying AI systems securely:

- “OWASP AI Security and Privacy Guide” (OWASP)

- “5 steps to make sure generative AI is secure AI” (Accenture)

- “Securing AI Makes for Safer AI” (CSET)

- “How to manage generative AI security risks in the enterprise” (TechTarget)

- “Security threats of AI large language models are mounting, spurring efforts to fix them” (Silicon Angle)

2 - Stanford offers exhaustive analysis of AI trends and challenges

If you’re tasked with monitoring the fast-evolving AI landscape, you might want to take a look at Stanford University’s “Artificial Intelligence Index Report 2024.” At about 500 pages, it’s an all-encompassing deep dive into today’s key AI issues.

“The AI Index report tracks, collates, distills, and visualizes data related to AI,” reads the report’s introduction.

Aimed at a broad audience, including policymakers, researchers and executives, the report seeks to help readers get “a more thorough and nuanced understanding of the complex field of AI.”

The report, divided into nine chapters, covers topics including research and development; technical performance; responsible AI; and policy and governance.

Chapter 3, titled “Responsible AI,” is likely the most relevant for cybersecurity leaders and practitioners.

“This chapter explores key trends in responsible AI by examining metrics, research, and benchmarks in four key responsible AI areas: privacy and data governance, transparency and explainability, security and safety, and fairness,” reads Chapter 3’s “Overview” section.

Some of this chapter’s main takeaways include:

- The AI industry needs rigorous and standardized benchmarks for assessing the level of responsibility of large language models (LLMs).

- Researchers are discovering newer, subtler ways of maliciously manipulating LLMs, unearthing more complex vulnerabilities in these systems.

- AI developers offer little transparency into their work, especially in areas like training data and methods, which makes it difficult to evaluate the safety of their systems.

- Incidents of malicious AI use are rising quickly.

- Businesses are becoming more aware of AI risks and have started to mitigate them, but most of these efforts are in the early stages.

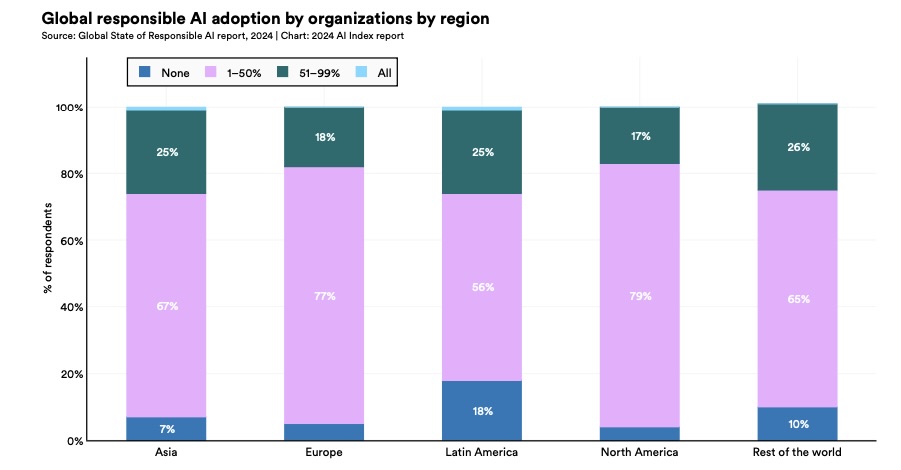

To illustrate the last insight, the chart below shows what percentage of surveyed organizations have adopted none (dark blue bar); at least one (pink bar); more than half (green bar); or all (light blue bar) of these AI risk mitigation measures:

- Fairness

- Transparency and explainability

- Privacy and data governance

- Reliability

- Security

(Source: 1,000-plus organizations polled by Stanford University and Accenture, February-March 2024)

Meanwhile, Chapter 7 is devoted to AI policy and governance, and includes information about the AI regulatory landscape that’s also relevant for cybersecurity teams.

To get more details:

- Check out the report’s highlights page

- Dive into the full “Artificial Intelligence Index Report 2024”

3 - OpenSSF launches open source SBOM tool

Are you involved with software bills of materials (SBOMs) in your organization? If so, you might want to check out Protobom, a new free tool that the Open Source Security Foundation (OpenSSF) launched this week.

Because an SBOM lists the “ingredients” that make up a software program, it can help IT and security teams identify whether and where a vulnerable component is present in their organizations’ applications, operating systems and other related systems.

However, multiple obstacles hinder the adoption and usage of SBOMs, and Protobom is designed to help with one of them: the existence of multiple SBOM data formats and identification schemes.

“Protobom aims to mitigate this issue by offering a format-neutral data layer on top of the standards that lets applications work seamlessly with any kind of SBOM,” reads the OpenSSF announcement.

The OpenSSF developed the open source tool in collaboration with the U.S. Cybersecurity and Infrastructure Security Agency (CISA), the Department of Homeland Security’s Science and Technology Directorate (S&T) and seven startup companies.

OpenSSF hopes that Protobom, which can be integrated into commercial and open source applications, will encourage SBOM adoption and simplify the creation and usage of SBOMs.
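To make the SBOM idea concrete, here is a minimal sketch in Python of what a format-neutral layer buys you: SBOMs in two formats (minimal CycloneDX JSON and SPDX 2.x JSON) are normalized into one hypothetical `Component` record, and the combined inventory is then searched for a vulnerable component. This is an illustration of the concept only, under the assumption of simplified documents; it is NOT Protobom's API or data model, and the helper names, app names and version sets are hypothetical.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Component:
    """Hypothetical format-neutral record (not Protobom's data model)."""
    name: str
    version: str
    purl: Optional[str]
    source: str  # which SBOM (e.g., which application) listed this component


def from_cyclonedx(doc: dict, source: str) -> list[Component]:
    # CycloneDX JSON keeps components in a top-level "components" array.
    return [
        Component(c.get("name", ""), c.get("version", ""), c.get("purl"), source)
        for c in doc.get("components", [])
    ]


def from_spdx(doc: dict, source: str) -> list[Component]:
    # SPDX 2.x JSON keeps packages in "packages"; a purl, if present, is an external reference.
    out = []
    for p in doc.get("packages", []):
        purl = next(
            (r.get("referenceLocator") for r in p.get("externalRefs", [])
             if r.get("referenceType") == "purl"),
            None,
        )
        out.append(Component(p.get("name", ""), p.get("versionInfo", ""), purl, source))
    return out


def load(text: str, source: str) -> list[Component]:
    doc = json.loads(text)
    # Dispatch on the top-level marker each format uses to identify itself.
    if doc.get("bomFormat") == "CycloneDX":
        return from_cyclonedx(doc, source)
    if "spdxVersion" in doc:
        return from_spdx(doc, source)
    raise ValueError(f"unrecognized SBOM format in {source}")


def find(components: list[Component], name: str, bad_versions: set[str]) -> list[str]:
    """Return the sources (e.g., apps) that include a listed vulnerable version of `name`."""
    return sorted({c.source for c in components if c.name == name and c.version in bad_versions})


if __name__ == "__main__":
    # Two hypothetical SBOMs for two hypothetical apps, one per format.
    cdx = json.dumps({"bomFormat": "CycloneDX", "specVersion": "1.5",
                      "components": [{"type": "library", "name": "log4j-core", "version": "2.14.1"}]})
    spdx = json.dumps({"spdxVersion": "SPDX-2.3",
                       "packages": [{"SPDXID": "SPDXRef-1", "name": "log4j-core", "versionInfo": "2.17.1"}]})
    inventory = load(cdx, "billing-app") + load(spdx, "hr-portal")
    print(find(inventory, "log4j-core", {"2.14.1"}))  # ['billing-app']
```

Once every SBOM is mapped into the same record, the "whether and where" question becomes a single query, regardless of which format each supplier used. Real SBOMs carry far more detail (dependency relationships, hashes, licenses), which this sketch ignores.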

To get more details, check out:

- The Protobom announcement “CISA, DHS S&T and OpenSSF Announce Global Launch of Software Supply Chain Open Source Project”

- The Protobom home page and GitHub page

For more information on SBOMs:

- “CISA, NSA push SBOM adoption to beef up supply chain security” (Tenable)

- “What is a software bill of materials (SBOM)? And will it secure supply chains?” (SDX Central)

- “How to create an SBOM, with example and template” (TechTarget)

- “How Organisations Can Leverage SBOMs to Improve Software Security” (Infosecurity Europe)

- “SBOMs and security: What IT and DevOps need to know” (TechTarget)

VIDEOS

An SBOM Primer: From Licenses to Security, Know What’s in Your Code (Linux Foundation)

SBOM Explainer: What Is SBOM? Part 1 (NTIA)

4 - CIS updates Benchmarks for Cisco, Google, Microsoft, VMware products

The Center for Internet Security has announced the latest batch of updates for its widely used CIS Benchmarks, including new secure-configuration recommendations for Cisco IOS, Google Cloud Platform, Windows Server and VMware ESXi.

Specifically, these CIS Benchmarks were updated in March:

- CIS Cisco IOS XE 16.x Benchmark v2.1.0

- CIS Cisco IOS XE 17.x Benchmark v2.1.0

- CIS Debian Linux 11 Benchmark v2.0.0

- CIS Google Cloud Platform Foundation Benchmark v3.0.0

- CIS MariaDB 10.6 Benchmark v1.1.0

- CIS Microsoft SQL Server 2022 Benchmark v1.1.0

- CIS Microsoft Windows Server 2019 Benchmark v3.0.0

- CIS Microsoft Windows Server 2022 Benchmark v3.0.0

- CIS PostgreSQL 13 Benchmark v1.2.0

- CIS PostgreSQL 14 Benchmark v1.2.0

- CIS Ubuntu Linux 18.04 LTS Benchmark v2.2.0 — Final Release

- CIS Ubuntu Linux 22.04 LTS Benchmark v2.0.0

- CIS VMware ESXi 6.7 Benchmark v1.4.0 — Final Release

- CIS VMware ESXi 7.0 Benchmark v1.4.0

- CIS VMware ESXi 8.0 Benchmark v1.1.0

In addition, CIS released brand-new Benchmarks for GitLab, Google Chrome Browser Cloud Management and MariaDB.

CIS Benchmarks are secure-configuration guidelines for hardening products against cyberattacks. Currently, there are more than 100 CIS Benchmarks for 25-plus vendor product families. CIS offers Benchmarks for cloud platforms; databases; desktop and server software; mobile devices; operating systems; and more.
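Much of the value of secure-configuration guidelines comes from checking them automatically. The sketch below is a minimal, hypothetical audit of an OpenSSH `sshd_config` file against two rules modeled loosely on the kind of SSH-hardening items found in Linux CIS Benchmarks (root login disabled, limited authentication attempts). The rule set, thresholds and function names are assumptions for illustration, not CIS's wording or tooling; consult the actual Benchmark for exact recommendations.

```python
from typing import Callable

# Hypothetical rules, modeled loosely on SSH-hardening items in Linux CIS Benchmarks.
# Keys are lowercase because sshd_config keywords are case-insensitive.
RULES: dict[str, Callable[[str], bool]] = {
    "permitrootlogin": lambda v: v.lower() == "no",
    "maxauthtries": lambda v: v.isdigit() and int(v) <= 4,
}


def parse_sshd_config(text: str) -> dict[str, str]:
    """Return global keyword -> value, keeping the first occurrence (as sshd does).

    Simplification: only the whitespace-separated "Keyword value" form is handled.
    """
    settings: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        key = parts[0].lower()
        if key == "match":
            break  # later lines are conditional; this sketch checks global settings only
        value = parts[1].strip() if len(parts) > 1 else ""
        settings.setdefault(key, value)
    return settings


def audit(text: str) -> dict[str, bool]:
    settings = parse_sshd_config(text)
    # An absent keyword falls back to sshd's built-in default; this sketch
    # conservatively reports it as a failure so a human reviews it.
    return {key: key in settings and check(settings[key]) for key, check in RULES.items()}


if __name__ == "__main__":
    sample = "# sample config\nPermitRootLogin no\nPermitRootLogin yes\nMaxAuthTries 6\n"
    print(audit(sample))  # {'permitrootlogin': True, 'maxauthtries': False}
```

Treating a missing setting as a failure is a deliberate design choice: it flags configurations that silently rely on defaults, which may differ across versions.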

To get more details, read the CIS blog “CIS Benchmarks April 2024 Update.” For more information about the CIS Benchmarks, check out the program’s home page, as well as:

- “Getting to Know the CIS Benchmarks” (CIS)

- “Security Via Consensus: Developing the CIS Benchmarks” (Dark Reading)

- “How to Unlock the Security Benefits of the CIS Benchmarks” (Tenable)

- “CIS Benchmarks Communities: Where configurations meet consensus” (Help Net Security)

- “CIS Benchmarks: DevOps Guide to Hardening the Cloud” (DevOps)

VIDEO

CIS Benchmarks (CIS)

5 - A temperature check on cloud security

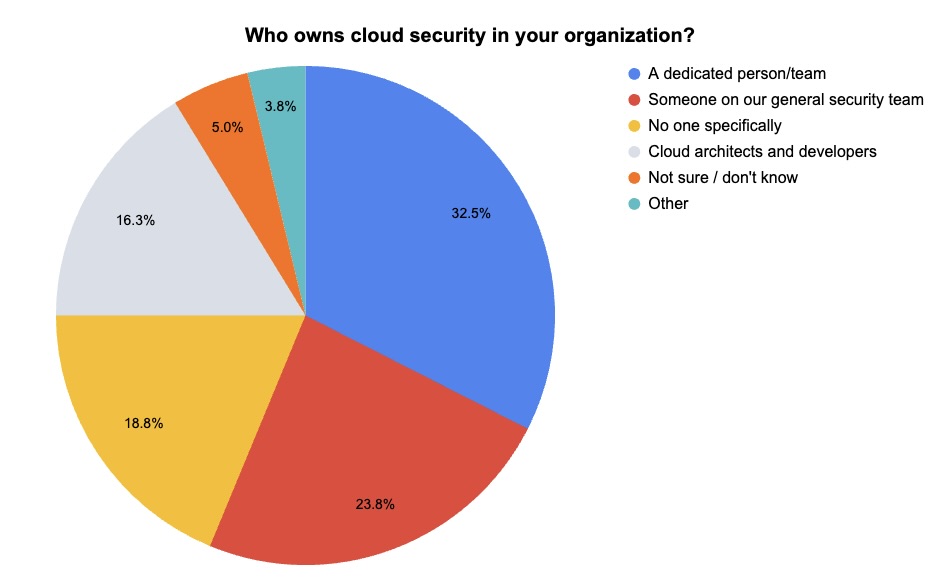

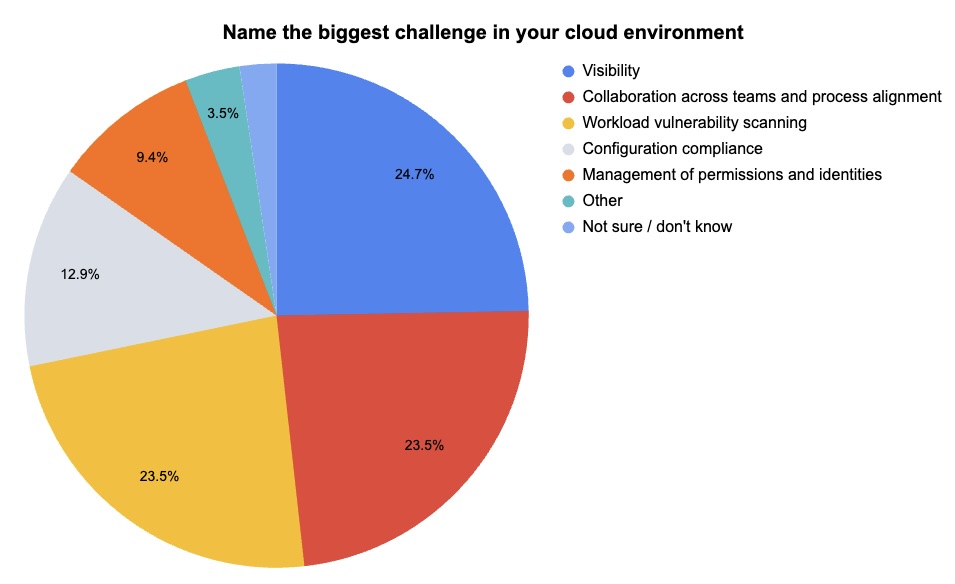

At our recent webinar “How to Make Your Security Team Experts In Cloud Security In Less Than 48 Hours,” we polled attendees on cloud security issues. Check out what they said about who’s in charge of cloud security in their team and about their organizations’ biggest cloud security challenge.

(80 webinar attendees polled by Tenable, March 2024)

(85 webinar attendees polled by Tenable, March 2024)

Want to learn how to find, prioritize, and remediate vulnerabilities in operating systems, container images, virtual machines, and identities without adding complexity? Watch the on-demand webinar “How to Make Your Security Team Experts In Cloud Security In Less Than 48 Hours.” Topics include:

- Asset inventorying, vulnerability identification and remediation prioritization in AWS, Azure and Google Cloud

- Managing security of container images and Kubernetes workloads

- Unifying vulnerability management across on-premises and cloud environments

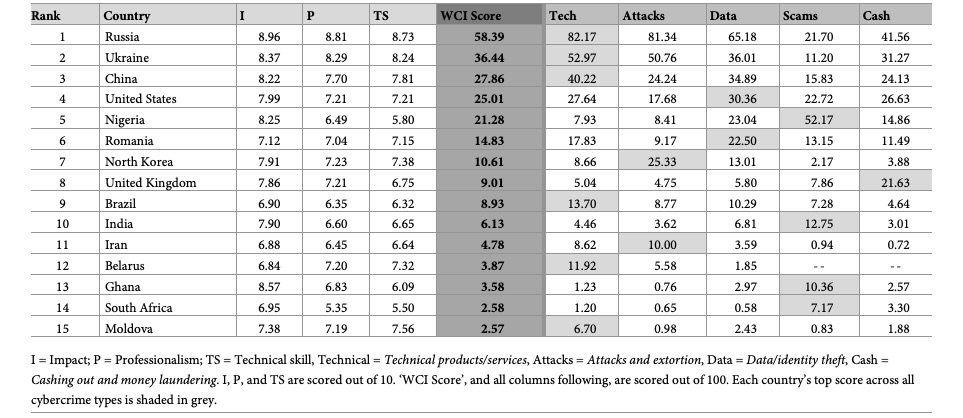

6 - Study: Russia ranks first in profit-driven cybercrime

A study about the world’s top geographic hotspots for financially motivated cybercrime has ranked Russia first, followed by Ukraine, China, the U.S. and Nigeria.

The findings come from researchers at the University of Oxford, the University of Canberra, the Paris Institute of Political Studies and Monash University. They compiled the first World Cybercrime Index (WCI) to shed light on which countries are the biggest sources of profit-driven cybercrime.

“By contributing to a deeper understanding of cybercrime as a localised phenomenon, the WCI may help lift the veil of anonymity that protects cybercriminals and thereby enhance global efforts to combat this evolving threat,” the researchers wrote in the article “Mapping the global geography of cybercrime with the World Cybercrime Index.”

World Cybercrime Index: Top 15 Countries

(Source: “Mapping the global geography of cybercrime with the World Cybercrime Index” research article from University of Oxford and the University of Canberra, April 2024)

The study also identified the five major types of global cybercrime:

- Technical products and services, such as malware coding and botnet access

- Attacks and extortion, including DDoS attacks and ransomware

- Data and identity theft, including hacking, phishing and credit card compromises

- Scams, such as business email compromise and online auction fraud

- Cashing out and money laundering, including credit card fraud and money mules

“We now have a deeper understanding of the geography of cybercrime, and how different countries specialise in different types of cybercrime,” study co-author Miranda Bruce from the University of Oxford said in a statement.

VIDEO

Mapping the global geography of cybercrime (University of Oxford)
